A Software Architecture for Precision Medicine

Intelligent systems in clinical care leverage the latest innovations in machine learning, real-time data stream mining, visual analytics, natural language processing, ontologies, production rule systems, and cloud computing to provide clinicians with the best knowledge and information at the point of care for effective clinical decision making. In this post, I propose a unified open reference architecture that combines all these technologies into a hybrid cognitive system for clinical decision support. Indeed, truly intelligent systems are capable of reasoning. The goal is not to replace clinicians, but instead to provide them with cognitive support during clinical decision making. Furthermore, Intelligent Personal Assistants (IPAs) such as Apple's Siri, Google's Google Now, and Microsoft's Cortana have raised our expectations on how intelligent systems interact with users through voice and natural language.

In the strict sense of the term, a reference architecture should be abstracted away from concrete technology implementation. However in order to enable a better understanding of the proposed approach, I take liberty in explaining how available open source software can be used to realize the intent of the architecture. There is an urgent need for an open and interoperable architecture which can be deployed across devices and platforms. Unfortunately, this is not the case today with solutions like Apple's HealthKit and ResearchKit.

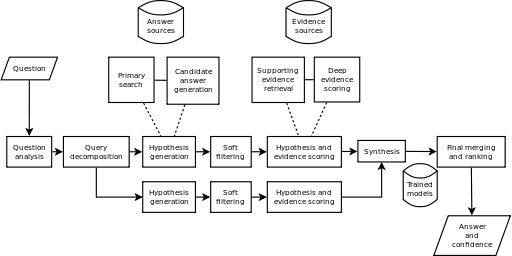

The specific open source software mentioned in this post can be substituted with other tools which provide similar capabilities. The following diagram is a depiction of the architecture (click to enlarge).

Clinical Data Sources

Clinical data sources are represented on the left of the architecture diagram. Examples include electronic medical record systems (EMR) commonly used in routine clinical care, clinical genome databases, genome variant knowledge bases, medical imaging databases, data from medical devices and wearable sensors, and unstructured data sources such as biomedical literature databases. The approach implements the Lambda Architecture enabling both batch and real-time data stream processing and mining.

Predictive Modeling, Real-Time Data Stream Mining, and Big Data Genomics

The back-end provides various tools and frameworks for advanced analytics and decision management. The analytics workbench includes tools for creating predictive models and data streaming mining. The decision management workbench includes a production rule system (providing seamless integration with clinical events and processes) and an ontology editor.

The incoming clinical data likely meet the Big Data criteria of volume, velocity, and variety (this is particularly true for physiological time series from wearable sensors). Therefore, specialized frameworks for large scale cluster computing like Apache Spark are used to analyze and process the data. Statistical computing and Machine Learning tools like R are used here as well. The goal is knowledge and patterns discovery using Machine Learning model builders like Decision Trees, k-Means Clustering, Logistic Regression, Support Vector Machines (SVMs), Bayesian Networks, Neural Networks, and the more recent Deep Learning techniques. The latter hold great promise in applications such as Natural Language Processing (NLP), medical image analysis, and speech recognition.

These Machine Learning algorithms can support diagnosis, prognosis, simulation, anomaly detection, care alerting, and care planning. For example, anomaly detection can be performed at scale using the k-means clustering machine learning algorithm in Apache Spark. In addition, Apache Spark allows the implementation of the Lambda Architecture and can also be used for genome Big Data analysis at scale.

In another post titled How Good is Your Crystal Ball?: Utility, Methodology, and Validity of Clinical Prediction Models, I discuss quantitative measures of performance for clinical prediction models.

Visual Analytics

Visual Analytics tools like D3.js, rCharts, ploty, googleVis, ggplot2, and ggvis can help obtain deep insight for effective understanding, reasoning, and decision making through the visual exploration of massive, complex, and often ambiguous data. Of particular interest is Visual Analytics of real-time data streams like physiological time series. As a multidisciplinary field, Visual Analytics combines several disciplines such as human perception and cognition, interactive graphic design, statistical computing, data mining, spatio-temporal data analysis, and even Art. For example, similar to Minard's map of the Russian Campaign of 1812-1813 (see graphic below), Visual Analytics can help in comparing different interventions and care pathways and their respective clinical outcomes over a certain period of time by displaying causes, variables, comparisons, and explanations.

Production Rule System, Ontology Reasoning, and NLP

The architecture also includes a production rule engine and an ontology editor (Drools and Protégé respectively). This is done in order to leverage existing clinical domain knowledge available from clinical practice guidelines (CPGs) and biomedical ontologies like SNOMED CT. This approach complements machine learning algorithms' probabilistic approach to clinical decision making under uncertainty. The production rule system can translate CPGs into executable rules which are fully integrated with clinical processes (workflows) and events. The ontologies can provide automated reasoning capabilities for decision support.

NLP includes capabilities such as:

- Text classification, text clustering, document and passage retrieval, text summarization, and more advanced clinical question answering (CQA) capabilities which can be useful for satisfying clinicians' information needs at the point of care; and

- Named entity recognition (NER) for extracting concepts from clinical notes.

Clinical Decision Services

The clinical decision services provide intelligence at the point of care typically using deployed predictive models, clinical rules, text mining outputs, and ontology reasoners. For example, Machine Learning algorithms can be exported in predictive markup language (PMML) format for run-time scoring based on the clinical data of individual patients, enabling what is referred to as Personalized Medicine. Clinical decision services include:

- Diagnosis and prognosis

- Simulation

- Anomaly detection

- Data visualization

- Information retrieval (e.g., clinical question answering)

- Alerts and reminders

- Support for care planning processes.

Intelligent Personal Assistants (IPAs)

Clinical decision services can also be delivered to patients and clinicians through IPAs. IPAs can accept inputs in the form of voice, images, and user's context and respond in natural language. IPAs are also expanding to wearable technologies such as smart watches and glasses. The precision of speech recognition, natural language processing, and computer vision is improving rapidly with the adoption of Deep Learning techniques and tools. Accelerated hardware technologies like GPUs and FPGAs are improving the performance and reducing the cost of deploying these systems at scale.

Hexagonal, Reactive, and Secure Architecture

Intelligent Health IT systems are not just capable of discovering knowledge and patterns in data. They are also scalable, resilient, responsive, and secure. To achieve these objectives, several architectural patterns have emerged during the last few years:

- Domain Driven Design (DDD) puts the emphasis on the core domain and domain logic and recommends a layered architecture (typically user interface, application, domain, and infrastructure) with each layer having well defined responsibilities and interfaces for interacting with other layers. Models exist within "bounded contexts". These "bounded contexts" communicate with each other typically through messaging and web services using HL7 standards for interoperability.

- The Hexagonal Architecture defines "ports and adapters" as a way to design, develop, and test an application in a way that is independent of the various clients, devices, transport protocols (HTTP, REST, SOAP, MQTT, etc.), and even databases that could be used to consume its services in the future. This is particularly important in the era of the Internet of Things in healthcare.

- Microservices consist in decomposing large monolithic applications into smaller services following good old principles of service-oriented design and single responsibility to achieve modularity, maintainability, scalability, and ease of deployment (for example, using Docker).

- CQRS/ES: Command Query Responsibility Segregation (CQRS) and Event Sourcing (ES) are two architectural patterns which consist in the use of event-driven messaging and an Event Store for separating commands (write-side) from queries (read-side) relying on the principle of Eventual Consistency. CQRS/ES can be implemented in combination with microservices to deliver new capabilities such as temporal queries, behavioral analysis, complex audit logs, and real-time notifications and alerts.

- Functional Programming: Functional Programming languages like Scala have several benefits that are particularly important for applying Machine Learning algorithms on large data sets. Like functions in mathematics, functions in Scala have no side effects. This provides referential transparency. Machine Learning algorithms are in fact based on Linear Algebra and Calculus. Scala supports high-order functions as well. Variables are immutable witch greatly simplifies concurrency. For all those reasons, Machine Learning libraries like Apache Mahout have embraced Scala, moving away from the Java MapReduce paradigm.

- Reactive Architecture: The Reactive Manifesto makes the case for a new breed of applications called "Reactive Applications". According to the manifesto, the Reactive Application architecture allows developers to build "systems that are event-driven, scalable, resilient, and responsive." Leading frameworks that support Reactive Programming include Akka and RxJava. The latter is a library for composing asynchronous and event-based programs using observable sequences. RxJava is a Java port (with a Scala adaptor) of the original Rx (Reactive Extensions) for .NET created by Erik Meijer.

Based on the Actor Model and built in Scala, Akka is a framework for building highly concurrent, asynchronous, distributed, and fault tolerant event-driven applications on the JVM. Akka offers location transparency, fault tolerance, asynchronous message passing, and a non-deterministic share-nothing architecture. Akka Cluster provides a fault-tolerant decentralized peer-to-peer based cluster membership service with no single point of failure or single point of bottleneck.

Also built with Scala, Apache Kafka is a scalable message broker which provides high-throughput, fault-tolerance, built-in partitioning, and replication for processing real-time data streams. In the reference architecture, the ingestion layer is implemented with Akka and Apache Kafka.

- Web Application Security: special attention is given to security across all layers, notably the proper implementation of authentication, authorization, encryption, and audit logging. The implementation of security is also driven by deep knowledge of application security patterns, threat modeling, and enforcing security best practices (e.g., OWASP Top Ten and CWE/SANS Top 25 Most Dangerous Software Errors) as part of the continuous delivery process.

An Interface that Works across Devices and Platforms

The front-end uses a Mobile First approach and a Single Page Application (SPA) architecture with Javascript-based frameworks like AngularJS to create very responsive user experiences. It also allows us to bring the following software engineering best practices to the front-end:

- Dependency Injection

- Test-Driven Development (Jasmine, Karma, PhantomJS)

- Package Management (Bower or npm)

- Build system and Continuous Integration (Grunt or Gulp.js)

- Static Code Analysis (JSLint and JSHint), and

- End-to-End Testing (Protractor).

Interoperability

Interoperability will always be a key requirement in clinical systems. Interoperability is needed between all players in the healthcare ecosystem including providers, payers, labs, knowledge artifact developers, quality measure developers, and public health agencies like the CDC. These standards exist today and are implementation-ready. However, only health IT buyers have the leverage to demand interoperability from their vendors.

Standards related to clinical decision support (CDS) include:

- The HL7 Fast Healthcare Interoperability Resources (FHIR)

- The HL7 virtual Medical Record (vMR)

- The HL7 Decision Support Services (DSS) specification

- The HL7 CDS Knowledge Artifact specification

- The DMG Predictive Model Markup Language (PMML) specification.